Tags: programming

If you've ever taken a computer science class, then you've probably heard of two's complement. What you might have been told is that it's a system for representing positive and negative numbers in computers, and that to convert a number to two's complement form, you invert all the bits and add 1.

"Okay," you may have thought to yourself on hearing this information, unconvinced, "but doesn't flipping the bits and adding 1 seem kinda arbitrary? And what's the POINT of two's complement?"

These are the questions I will attempt to briefly answer.

Here's an example to reacquaint ourselves with two's complement. Let's say you have a decimal number, . The little 'd' stands for decimal. Assuming you have 4 bits, is in binary (where the little 'b' stands for binary). To convert this number to two's complement, you change the 0s to 1s and the 1s to 0s, giving , and then you add 1, giving . The number is treated as in the two's complement system.

The POINT of two's complement, or at least one of the points, is that it allows you to take the same circuitry with which you add, multiply and subtract positive numbers, and use it to add, multiply and subtract negative numbers. This means you don't need complicated circuitry just for negative numbers.

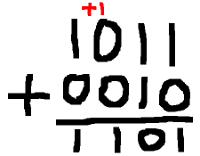

Let's explore why negative numbers might require complicated circuitry. Remember how they taught you to add numbers digit-by-digit in school? Computers add numbers in pretty much the same way. They go through the digits from least significant (rightmost) to most significant (leftmost) and add them up individually. If the sum of two digits is too big to store in a single digit, then you have to carry a 1 over to the next digit, as shown in the example below.

This algorithm is easy to model with logic gates because the sum at each digit position depends only on the two digits at that position and on whether the previous position produced a carry. It doesn't have to look 10 digits ahead, or 10 digits behind. Just 1 behind. For this reason, the gates can be chained together sequentially, making the circuit straightforward and efficient.

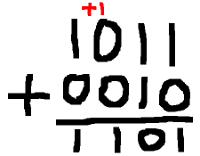

The subtraction algorithm you learned, on the other hand, is a pain in the ass. If you'll recall, it proceeds from right to left, like addition does. But if the negative digit is larger than the positive digit, you have to look arbitrarily far ahead in order to "borrow" from a more significant positive digit.

This means that the logic gates that perform subtraction for the rightmost bit have to be connected to the leftmost bit somehow -- and all the other bits, too! The resulting circuit will be a tangled mess, like a big ball of hair. Also, if you have an explicit flag that says whether a number is positive or negative, then you need logic to handle updates to this flag, too.

But what if... WHAT IF, my friend, you could use the straightforward addition algorithm, but for negative numbers? And what if I told you, my dear friend, that two's complement allows you to do this! (Please be my friend). It allows you to treat negative numbers as if they were regular, boring old positive numbers, and avoid the ball of hair.

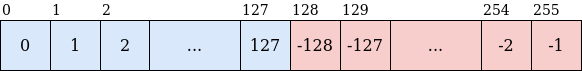

How exactly does two's complement work? Forget about the bit-twiddling for now. Let's say you have 8 bits, which can take on $2^8 = 256$ possible values (indexed from 0 to 255). In the two's complement system, the first half of the numbers in this range, 0 to 127, are their usual positive selves. +0 is mapped to +0, and +127 is mapped to +127.

Here's the tricky part: the next half of the numbers, 128 to 255, are mapped to -128 through -1. This is like saying that the negative counterpart of a number $n$, i.e. its two's complement, is $256 - n$.

FOR EXAMPLE. The two's complement forms of 0, 1 and 127 (which you can check in the number line above) are:

$$
\begin{aligned}
256 - 0 &= 256 \equiv 0 \pmod{256} \\
256 - 1 &= 255 \\
256 - 127 &= 129
\end{aligned}
$$

A reminder: addition of 8-bit numbers has to be done modulo $256$, because only $256$ numbers can be represented. If we add two 8-bit numbers together and the sum exceeds $255$, it rolls around to 0 and we count from there. So, $256 - 0$ is $256$, which, modulo (or mod) $256$, is $0$.

What happens when we add a number $n$ and its two's complement form? We get 0, just as if we subtracted the number from itself!

$$n + (256 - n) = 256 \equiv 0 \pmod{256}$$

I hope this fact goes some of the way to convincing you that two's complement is a sensible system. That we can take the two's complement of a number, and it will behave like the negative of that number when we add it to something.

Let's say we have $N$ bits, rather than focusing on the 8-bit case specifically. We can now say more generally that, given two numbers $a$ and $b$,

$$a + (2^N - b) = 2^N + (a - b) \equiv a - b \pmod{2^N}$$

Adding the two's complement form of a number is like subtracting it, under modulo $2^N$!

Here are some more facts about two's complement.

Okay. There are two details about two's complement left to discuss. The first detail is the big wart on its backside. The second detail is the bit-twiddling that was used to introduce you to two's complement all those years ago.

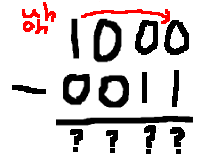

The wart on two's complement's bottom is that, as you may have noticed, there's no +128 to match -128 in our 8-bit number line! The two's complement operation applied to 128 gives $256 - 128 = 128$. That is, the two's complement of 128 is itself. There's no space left to represent both +128 and -128, so we have to map the value 128 to only one of them. I'm not sure why, but the convention is to pick -128. Some things still work as expected: $128 + 1 = 129$, i.e. adding 1 to -128 still gives -127. But when we try to negate -128, we get: $256 - 128 = 128$, which is -128 again. -128 can't be negated!

This can be the source of nightmarish bugs. And, since all modern computers use two's complement (the C23 standard now requires it for signed integers), you can't avoid it. If you compile and run the following C code on your computer, it will most probably print -128.

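    #include <stdio.h>

    int main(void) {
        /* signed char, because plain char is unsigned on some platforms */
        signed char n = -128;
        /* -n is computed as the int 128, which doesn't fit back into 8 bits */
        n = -n;
        printf("%d\n", n);
        return 0;
    }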

And now, here it is. The moment that nobody has been waiting for. Where does the bit-twiddling come from? We have described the two's complement form as $2^N - n$, so why is it introduced using bit flips and rogue +1's? I'm not sure why it's introduced in such a confusing way, but the bit-twiddling magic is just a trick to compute $2^N - n$ without performing subtraction or needing to store $2^N$ anywhere (which would require $N + 1$ bits). This is easiest to demonstrate with an example. Let's say you have 4 bits, and you want to compute the two's complement of a number $n$ (using our notation for decimal and binary numbers from before). The two's complement form is

$$16_d - n = 10000_b - n = (1111_b - n) + 1$$

We split $10000_b$ into $1111_b$ and $1$. Subtracting $n$ from $1111_b$ is equivalent to flipping all the bits in $n$ (this holds for any 4-bit number, since subtracting from all 1s never needs a borrow). Then we add 1. That's where the magic comes from.

I'd be happy to hear from you at galligankevinp@gmail.com.